by Mary Lou Zoback, Friday, January 20, 2012

Many of the world's largest cities, such as Caracas, Venezuela, are hot spots of extreme poverty and are among the most vulnerable to catastrophes from natural hazards. Abby Baca, RMS

U.S. Geological Survey seismologist Lucy Jones and her son, Niels Hauksson, watch the Station Fire near their home in La Cañada Flintridge, Calif. Egill Hauksson

The Station Fire in California burned dangerously close to communications towers and the Mount Wilson Observatory. Todd Hoefen, USGS

More than 65,000 people were left temporarily homeless by the magnitude-6.3 earthquake that struck L'Aquila, Italy, in April 2009. Walter Mooney, USGS

The L'Aquila earthquake crumpled both medieval and modern infrastructure. Walter Mooney, USGS

The global financial disaster of 2009 has many parallels with catastrophic natural hazards. It struck largely without warning, its impact was greatly magnified by a highly complex system of cascading consequences, and the mechanisms supposedly in place to mitigate the worst impacts (regulations, in the case of the financial system) failed. There was awareness that such a meltdown could theoretically occur, but it was considered so unlikely that it was evidently not deemed worth planning for.

Much the same could be said about any catastrophic natural disaster, such as the devastation of Hurricane Katrina in 2005 or the 2004 Sumatra earthquake and tsunami. The central problem lies not in predicting these natural hazards but in mitigating them, that is, in keeping a hazard from becoming a catastrophe. Mitigation is becoming increasingly difficult because of factors ranging from population growth to climate change. That is where geoscientists must step in and help society reduce its risk from natural hazards.

Natural hazards result from active geologic, meteorological and hydrologic processes acting, sometimes violently, on and within Earth. These hazards become disasters only where humans and our infrastructure meet nature. We can reduce our risk from natural disasters either by reducing our exposure or by reducing the vulnerability of the population and the built environment.

Unfortunately, two significant factors conspire to confound our efforts to reduce the risk from natural hazards: demographic trends that increase and concentrate exposure, and climate change, which increases both vulnerability and the climate-related hazards themselves. Many of the world’s major cities lie in areas that face inherent natural hazards, and more and more people are moving into harm’s way.

More than half of the 25 largest megacities globally are subject to significant earthquake hazards; a similar number are situated on river floodplains and are subject to frequent flooding. Consider monsoons in Mumbai, India; earthquakes in Mexico City, Mexico; earthquakes and volcanoes in Jakarta, Indonesia; cyclones, earthquakes and tsunamis in Dhaka, Bangladesh; droughts and famines in Addis Ababa, Ethiopia.

Add in the changes expected from climate change (more frequent and more severe weather-related events, from droughts to storms to heat waves, as well as rising sea levels) and you get a recipe for disaster, especially given that one-tenth of the global population, and one out of every eight urban dwellers, lives in coastal areas less than 10 meters above sea level. Continued coastal development, coupled with climate change, exposes more residents to hydrologic and meteorological hazards such as storms, flooding and cyclones. The lowest-lying communities, even in the United States, are also particularly vulnerable to sea-level rise.

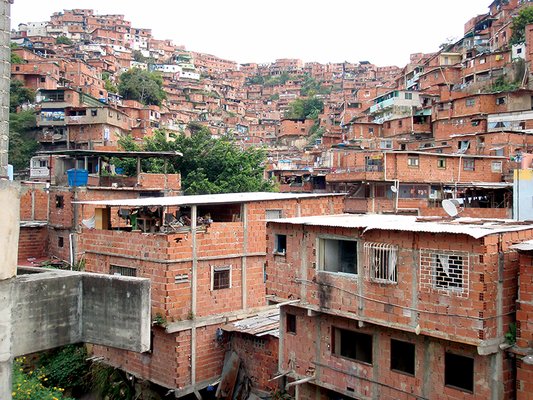

The problem is intensified by the fact that many of the world’s largest cities are hot spots of extreme poverty, where millions of people live in informal and substandard housing. An estimated 1 billion people, one-sixth of the world’s population, now live in shanty towns, and by 2030 more than 2 billion people are projected to be living in slums. These areas are especially prone to disaster because flimsily constructed buildings cannot withstand nature’s fury. As Gregory van der Vink argues in the January issue of EARTH, reducing a population’s vulnerability to climate change and natural hazards can also reduce its vulnerability to poverty, so risk-reduction efforts can also improve economic well-being in many parts of the world.

Building resilience to natural hazards is not just an issue for the developing world, however; it is a problem for all societies. A couple of decades ago, a California congressman asked a seismologist three simple questions: What is the scope of the earthquake problem in California? What can we do about it? And how much will it cost? More than 20 years later, we in the earth science community still don’t have these answers. We have quantified the probabilistic seismic hazard, expressed as the percentage likelihood of exceeding a given level of ground acceleration in 50 years, but that may not be a very clear statement of hazard for most policymakers, given the much shorter time frames over which they make decisions. Therein lies the mismatch between the information decision-makers want and what we, as earth scientists (and engineers), are prepared to provide. The same simple questions could be posed about other hazards anywhere.
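A hazard stated as "a percentage likelihood in 50 years" can be re-expressed on other time frames. The sketch below assumes the time-independent (Poisson) model often used in probabilistic seismic hazard analysis, in which a probability P of exceedance over t years implies a mean return period T = -t / ln(1 - P). The function names and the four-year window (roughly one term of office) are illustrative assumptions, not part of the original discussion.

```python
import math


def return_period_years(prob_exceed: float, window_years: float) -> float:
    """Mean return period T implied by probability P of exceedance in t years.

    Assumes a Poisson (time-independent) process: P = 1 - exp(-t / T),
    so T = -t / ln(1 - P).
    """
    if not 0.0 < prob_exceed < 1.0:
        raise ValueError("prob_exceed must be strictly between 0 and 1")
    return -window_years / math.log(1.0 - prob_exceed)


def prob_in_window(prob_exceed: float, window_years: float,
                   new_window_years: float) -> float:
    """Re-express the same hazard level as a probability over a new window."""
    annual_rate = 1.0 / return_period_years(prob_exceed, window_years)
    return 1.0 - math.exp(-annual_rate * new_window_years)


# Hypothetical illustration: two common hazard levels viewed over a 4-year term.
for p in (0.10, 0.02):
    T = return_period_years(p, 50)
    print(f"{p:.0%} in 50 yr -> return period ~{T:.0f} yr; "
          f"{prob_in_window(p, 50, 4):.2%} over 4 yr")
```

Under this assumption, 10 percent in 50 years corresponds to a return period of about 475 years and 2 percent in 50 years to about 2,475 years; over a four-year window both work out to well under 1 percent, which may help explain why such numbers can seem remote on a political time frame.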

An even more striking mismatch exists between what decision-makers want and what scientists can provide when it comes to “predictions.” For weather and flood hazards, fortunately, relatively sophisticated and robust meteorological and hydrologic models (calibrated with many years of data) now provide reliable short-term forecasts of the timing, location and magnitude of a hurricane landfall or flood event. The relative certainty of these “predictions” and a warning window of several days provide an opportunity (hopefully) for informed response of the kind policymakers desire.

However, short-term forecasts of solid-earth hazards, particularly earthquakes and volcanic eruptions, have proven considerably more challenging. Even though an increasing rate of small earthquakes can signal an imminent volcanic eruption, for example, a truly deterministic prediction, in which a specific time, location and magnitude are specified ahead of time, has not been possible for any natural hazard.

The deadly April 6, 2009, magnitude-6.3 earthquake in L’Aquila, Italy, brought the dilemma of earthquake prediction under uncertainty into a very public forum. Seismicity had increased in the region three months before the earthquake struck. A month before the earthquake, a lab technician told the mayor of a nearby town that an earthquake was imminent. The technician’s supporting data and methodology, as well as information on the baseline natural variation in the signal (in this case, an increase in radon gas levels), had not been published and were not available for scrutiny. His prediction window came and went, and both scientists and government officials dismissed his work, saying that earthquake prediction was not possible at this time. Then the moderate-sized earthquake struck, killing about 300 people and leaving 65,000 temporarily homeless. Media reports questioned why this “prediction” had not been heeded.

Italian seismologists have known for a number of years that seismicity in Italy clusters in time and space. They and others have been developing short-term forecasting algorithms based on the idea that increased activity of small earthquakes in a region can be used to quantify the increased probability of larger events to follow. They were within months of establishing an institute for testing such algorithms in Italy when the L’Aquila earthquake struck. In fact, after the L’Aquila earthquake, a forecasting scheme developed by scientists at the Istituto Nazionale di Geofisica e Vulcanologia successfully provided the Italian Civil Defense with daily probabilistic forecasts of the location and size of aftershocks.

In August 2009, an independent earthquake forecasting evaluation center for Italy was established as part of an international collaboration of such centers, the Collaboratory for the Study of Earthquake Predictability (CSEP), led by the Southern California Earthquake Center. This program of rigorous, independent and objective testing of proposed forecasting methodologies holds great promise for determining whether there is a scientific basis “to raise an alert” and take some preventive actions, even if we cannot make deterministic predictions with certainty.

When (if?) we gain confidence in forecasting methodologies that identify a short-term (months to days) increase in earthquake probability, how should we frame that information so that it is useful to decision-makers? A small but growing number of earth scientists argue that we must help decision-makers with finite resources weigh the lives that could be saved against, for example, the economic costs of disruption from an evacuation. Other factors, such as the loss of credibility and potential liability for false alarms, must also be considered.
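One simple way to frame such a trade-off is the classic cost-loss decision model from weather-forecast decision analysis. The sketch below assumes that a protective action (say, an evacuation) costs C whether or not the event occurs and fully prevents an avoidable loss L if it does; acting then minimizes expected cost whenever the forecast probability p exceeds C/L. The function names and numbers are hypothetical, and the model deliberately leaves out the credibility and liability costs of false alarms mentioned above, as well as the hard problem of expressing lives and disruption in common units.

```python
def cost_loss_threshold(action_cost: float, avoidable_loss: float) -> float:
    """Probability above which acting has the lower expected cost (C / L)."""
    if action_cost < 0 or avoidable_loss <= 0:
        raise ValueError("need action_cost >= 0 and avoidable_loss > 0")
    return action_cost / avoidable_loss


def should_act(prob: float, action_cost: float, avoidable_loss: float) -> bool:
    """True if acting minimizes expected cost under the simple cost-loss model.

    Expected cost of acting:      C      (paid whether or not the event occurs)
    Expected cost of not acting:  p * L  (loss incurred only if the event occurs)
    """
    if not 0.0 <= prob <= 1.0:
        raise ValueError("prob must be between 0 and 1")
    return prob > cost_loss_threshold(action_cost, avoidable_loss)


# Hypothetical numbers in arbitrary, common units.
cost_of_action = 5.0
loss_avoided = 1000.0
for p in (0.001, 0.01, 0.05):
    print(p, should_act(p, cost_of_action, loss_avoided))
```

In this framing, a short-term rise in probability matters for a given action only if it pushes p above C/L, so cheap, low-disruption measures have low thresholds and can be justified even when probabilities remain small.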

The appropriate cost-benefit analysis on a longer timescale, such as the one posed by the California congressman’s questions, is more vexing. The timescale for mitigation through measures such as large-scale retrofitting generally exceeds politicians’ election cycles; hence, costly mitigation decisions are easier to postpone than to address. Yet residents of the San Francisco Bay Area have never turned down a bond measure targeted at retrofitting major infrastructure (water systems and the mass transit system), suggesting that they are willing to tax themselves now to ensure that these systems function after a quake.

Still, a large inventory of buildings at risk of collapse exists throughout the San Francisco Bay Area and in many other parts of the United States, as well as in the rest of the world, much of which has far less money available for such expensive endeavors. So perhaps, as a first step, we should work with decision-makers to institute a safety risk ranking system for privately owned buildings that could provide an objective basis for cost-benefit analyses of measures to reduce casualties and post-quake disruption. Beginning these dialogues about the potential costs of mitigation, and quantifying the potential avoidable losses, would be valuable for both earth scientists and decision-makers.

As earth scientists, if we want to truly help society reduce risk, we need to work with disaster-preparedness experts, sociologists and economists to ensure that, while we raise awareness of hazard and risk, we also empower society to deal with these risks by providing information on the (often simple) steps people can take to reduce their vulnerability. The Great California ShakeOut earthquake preparedness drill held in October is a good example. Drills like it are a positive, low-cost, low-disruption action that could be taken during periods of increased probability of a natural hazard event.

Earth scientists should actively support independent methodology evaluation centers, such as CSEP, that test short-term forecasting methods; this testing will allow us to objectively determine whether some methodologies provide reliable, potentially actionable information. Finally, and most importantly, we should actively engage decision-makers in framing the questions to be answered and then provide quantitative information on risk, or on short-term increases in the probability of an event, which, even if imperfect, can inform decisions that balance the cost of action against saving lives and reducing future disruption.

© 2008-2021. All rights reserved. Any copying, redistribution or retransmission of any of the contents of this service without the expressed written permission of the American Geosciences Institute is expressly prohibited.