by Timothy Oleson Wednesday, September 23, 2015

Arthur Holmes circa 1912. Credit: Wikimedia Commons.

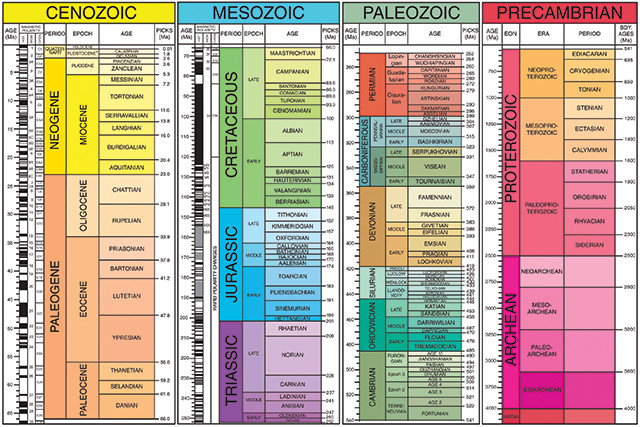

Ask a geologist when the Paleogene Period started and odds are very good the answer will be about 65.5 million years ago. Ask about the Carboniferous and you’ll likely hear 359 million years ago. Ask how old Earth is and the answer will almost invariably be 4.55 billion years, give or take a few tens of millions of years. Today, most geologic ages are well established and widely agreed upon. But the geologic timescale wasn’t always so settled.

Prior to last century, estimates of Earth’s age — which of course constrained the ages of the various geologic periods recognized at the time — ranged broadly from thousands of years to more than 1 billion years. Whereas biblical chronologies favored the shorter reaches of that range, scientific estimates favoring older ages were based on a variety of terrestrial and celestial observations. But it wasn’t until the advent of radiometric dating in the early 20th century that scientists began to pin down the true timing of Earth’s history.

The eventual ascendance some years later of radiometric dating over other methods for determining geologic time owes more to British physicist and geologist Arthur Holmes than perhaps any other scientist. It was Holmes who, with his vital early research, improved the technique and propelled it forward after early attempts faltered. Elucidating the geologic timescale became his life’s work; it was an effort that began in earnest with the publication in March 1913 of his now-famous first book, “The Age of the Earth.”

The title page of the first edition of Holmes' "The Age of the Earth," published in 1913 by Harper and Brothers. Credit: Holmes, A., 1913, "The Age of the Earth," New York, Harper and Brothers.

Beginning in the late 17th century with Nicolas Steno and continuing through the next two centuries, scientists began recognizing that distinct rock strata represented different periods of time in Earth’s history. The strata were often distinguished by their unique fossil assemblages and named based on the locality where they were first described. Silurian-aged rocks, named for an ancient tribe of Welsh Celts, contained abundant fossils of jawless fish as well as early coral fossils. Devonian-aged rocks, named for the English county of Devon, contained skeletons of jawed fish and the first terrestrial tetrapods and were typically found above Silurian rocks in the stratigraphic column.

These sorts of observations allowed geologists of the day to work out the relative ordering of geologic periods: Devonian rock, containing more complex fossils and lying above Silurian rock as it typically did, must have been more recent, for example. But there was still the question of how long each period lasted and, indeed, how long Earth had been around.

By the latter half of the 19th century, European and American scientists had largely come to accept, given the slow rates of geological processes they observed, that Earth must be far older than espoused by biblical tradition. Answering the question that emerged from that realization — how old is it then? — led to a multitude of guesses based on a variety of different observations.

Physicists, astronomers and geologists each had their methods. Some, like Lord Kelvin, estimated the rate at which Earth was thought to be cooling from an initially molten state, while others used an early understanding of the tidal interactions between Earth and the moon. Geologists at the time — T. Mellard Reade, John Phillips and Charles Doolittle Walcott, to name a few — preferred calculations based on observations of salt accumulation in the oceans (and assuming they’d started as freshwater bodies) or rates of sediment deposition and stratigraphic thicknesses.
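The arithmetic behind the geologists' accumulation methods is simple division, and a minimal sketch makes clear how much rides on the assumed rate. The function name, the thickness and the deposition rates below are hypothetical placeholders chosen for illustration, not figures used by Reade, Phillips or Walcott.

```python
def accumulation_age(total_amount: float, rate_per_year: float) -> float:
    """Age in years = total accumulated amount / assumed constant yearly rate."""
    if rate_per_year <= 0:
        raise ValueError("rate_per_year must be positive")
    return total_amount / rate_per_year


# Hypothetical inputs for illustration only: a composite stratigraphic
# thickness in meters and three assumed deposition rates in meters per year.
thickness_m = 30_000.0
for rate in (0.0001, 0.0003, 0.001):
    age = accumulation_age(thickness_m, rate)
    print(f"rate {rate} m/yr -> {age / 1e6:.0f} million years")
```

Because the age scales inversely with the rate, a tenfold disagreement about the rate becomes a tenfold disagreement about the age.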

Despite tremendous debate and disagreement over the accuracy and relevance of each methodology, estimates from the various techniques mostly clustered between tens of millions and several hundred million years, and “approximately 100 million years” emerged as the de facto value for many scientists. Although each of these approaches offered vast improvements in dating, and collectively represented a fundamental sea change in the scientific understanding of the world, each had its flaws. It would take the discovery of a demonstrably predictable phenomenon, radioactivity, to really expose the scope of Earth’s history.

Not long after the first detections of radioactivity by Henri Becquerel in 1896 and by Pierre and Marie Curie in 1898, a handful of scientists began to see its potential for geologic dating. Ernest Rutherford and Frederick Soddy, working together in the first years of the 20th century, found that radioactive elements decayed at predictable rates. Specifically, they were the first to suggest that helium found in rocks was a decay product of radioactive elements such as uranium, and Rutherford proffered the first radiometric age for a mineral in 1905: 497 million years for a sample of fergusonite.

Also in 1905, Robert Strutt, then a researcher at Cambridge University, began analyzing the helium content of radioactive minerals from numerous samples. He recognized that helium dating was flawed because, as a gas, helium is prone to escape from rocks. Thus, it could only be counted on to give minimum age estimates. When, in 1910, a 20-year-old student named Arthur Holmes signed on to work in his lab, now at London’s Imperial College, Strutt asked Holmes to pursue a more reliable method for determining radiometric ages.

Holmes' 1913 timescale from "The Age of the Earth." Credit: Holmes, A., 1913, "The Age of the Earth," New York, Harper and Brothers.

Holmes arrived at Imperial College on scholarship in 1907 and spent two years as a physics student, followed by a year in geology. From an early age, he’d developed a keen interest in the latter, especially what it had to say about the age of the planet. Upon entering Strutt’s lab, Holmes picked up on the work of American chemist Bertram Boltwood, who had established that lead was the final decay product of radioactive uranium. What’s more, lead was a solid — meaning it couldn’t escape from a rock as helium did — and lead-uranium ratios in many samples followed a regular trend that appeared to correlate, at least in a relative way, with the minerals’ ages. By 1907, Boltwood had calculated and published lead-uranium radiometric ages for 10 samples.

Holmes saw tremendous potential in Boltwood’s technique, as well as room for improvement. Working with nepheline syenites collected in Norway and known to be of Devonian age, Holmes painstakingly removed the uranium-bearing minerals and analyzed their lead-uranium ratios. Using the most up-to-date decay rates for uranium and radium, an intermediate decay product of uranium on the way to lead, he established an age of 370 million years for the syenites. He also recalculated Boltwood’s dates, adjusting each of the American’s figures by as much as 100 million years. After less than a year of research, Holmes published his results in the Proceedings of the Royal Society of London in 1911.
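The core of a lead-uranium age calculation can be sketched in a few lines. This is a simplified modern illustration, not Holmes' actual 1911 procedure: it assumes that all lead in the mineral is radiogenic, that the mineral has remained a closed system, and that only uranium-238 decaying to lead-206 is involved. The decay constant is the modern value for uranium-238, not the rate available to Holmes, and the function name and sample ratio are hypothetical.

```python
import math

# Modern decay constant of uranium-238 in 1/year (assumption: this is
# today's accepted value, not the decay rate Holmes used in 1911).
LAMBDA_U238 = 1.55125e-10


def lead_uranium_age(pb_per_u: float, decay_constant: float = LAMBDA_U238) -> float:
    """Age in years from the atomic ratio of radiogenic lead to remaining uranium.

    Closed-system decay gives Pb/U = exp(lambda * t) - 1,
    so t = ln(1 + Pb/U) / lambda.
    """
    if pb_per_u < 0:
        raise ValueError("pb_per_u must be non-negative")
    return math.log1p(pb_per_u) / decay_constant


# Hypothetical atomic ratio chosen for illustration only.
age = lead_uranium_age(0.059)
print(f"{age / 1e6:.0f} million years")
```

For small ratios the logarithm is nearly linear, so the age is roughly proportional to the lead-uranium ratio. That is consistent with the regular trend between ratio and relative age that Boltwood observed.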

Importantly, as Holmes biographer Cherry Lewis notes in a chapter of the Geological Society’s 2001 Special Publication, “The Age of the Earth: From 4004 B.C. to A.D. 2002,” the young Holmes “realized there was no point in simply having an age for its own sake. To him the only thing that mattered was that each radiometric date was a new control point on the geological timescale, and so to be of use each date must be assigned to a geological period.” With his 1911 paper, Holmes became the first person to confidently assign radiometric dates to a few geologic ages based on the stratigraphic localities from which the dated samples had been taken.

During a six-month stint in Africa prospecting for a mining company, and away from research, Holmes found no minerals of economic consequence but plenty of fascinating Precambrian rocks to study and date. His interest in Earth’s oldest rocks and in further graduating Earth’s geologic history had only been strengthened. With his paper having been well-received by the Royal Society, he was soon hired back on at Imperial, where he resumed his research and began work on what would become a masterpiece of the geologic literature.

The Geological Society of London's Burlington House, where Holmes' 1911 paper was presented to members of the Royal Society by his advisor Robert Strutt. Credit: ©Alan Levine, Creative Commons Attribution 2.0 Generic.

“The Age of the Earth” was published in March 1913. Holmes’ dramatic opening to the preface set the stage not only for the book’s subject but also for its narrative style, uncommon for science books of any era: “It is perhaps a little indelicate to ask of our Mother Earth her age, but Science acknowledges no shame and from time to time has boldly attempted to wrest from her a secret which is proverbially well guarded.”

He began by detailing the history of Earth’s “time problem,” starting with the efforts of ancient peoples and working up past James Ussher (the Irish bishop who in 1650 famously declared that Earth had been created in 4004 B.C.) as well as William Smith, Charles Lyell, Lord Kelvin and others, until he reached the scientific arguments of the early 20th century. One by one, he laid out each of these in great detail, including their strengths and flaws as he saw them.

In the concluding chapter he wrote, “Of the various methods which have been devised to solve the problem of the earth’s age, only two, the geological and the radioactive, have successfully withstood the force of destructive criticism.” These two were the so-called “hourglass methods”: “In the one the world itself is the hour-glass, and the accumulating materials are salt, the sedimentary rocks and calcium-carbonate. … In the other case the accumulating materials are helium and lead, and the hour-glass is constituted by the minerals in which they collect.”

The usefulness of both methods was rooted in the precept of uniformitarianism — espoused in a geological context first by James Hutton in the 18th century and later popularized by Lyell — that the rates of the processes involved are constant over time. But, as Holmes noted, both cannot be simultaneously correct, for “if we favour the uniformity of geological processes … then we must reject uniformity of radioactive disintegration.” As the latter was tantamount to believing “that the laws of physics and chemistry vary with time,” Holmes unquestionably favored the use of radioactive minerals, which, he noted, “are clocks wound up at the time of their origin.”

Holmes’ main intent with “The Age of the Earth” was evidently to introduce the issue to a broad audience — the book was published by Harper and Brothers, not a strictly scientific imprint — and to lay out a case for radiometric dating as the way forward for accurately gauging Earth’s history. And indeed, it is recognized as the first attempt to do so, at least with such thoroughness and clarity.

Additionally, though, Holmes included in the book what is often considered the first geological timescale. Whereas his 1911 paper listed a handful of ages for the Precambrian up to the Carboniferous — perhaps making that the first, albeit partial, timescale — in the book he gave the most complete scientific account yet of Earth’s entire history based on radiometric dating, starting from the Archean and stretching up through the relatively recent Pleistocene. And he reiterated the ages of the oldest-known rocks as about 1.6 billion years, far older than most geologists of the time typically conceded. These bold statements, along with a style of writing that “endeared him to his readers both inside and outside the profession,” as Lewis writes, have made “The Age of the Earth” a milestone not just for Holmes but for geology.

One hundred years after Holmes' original was published, the modern geologic timescale continues to be revised. The Geological Society of America released its most recent version in December 2012. Credit: Walker, J.D., Geissman, J.W., Bowring, S.A., and Babcock, L.E., compilers, 2012, Geologic Time Scale: Geological Society of America, doi: 10.1130/2013.CTS004R3C.

Of course, the book did not convince everyone. As some scientists correctly pointed out, the basics of radioactivity were not fully understood. Radiometric dating itself had only been around for a decade and was hardly a time-tested method. Irish geologist John Joly, a long-time proponent of dating based on salt accumulation rates in the oceans, suggested, for example, that uranium might have decayed at different rates through time, thus invalidating radiometric dates altogether.

The early failures of helium dating had also soured many scientists on the technique as a whole, and there were relatively few samples — even by Holmes’ assessment — that could be reliably dated by lead-uranium ratios. And then there was simply the inertia of pride and tradition: Many geologists disliked the seeming encroachments into their field by physicists and chemists.

But proponents of the new-school technique were unbowed. With every advancement in the understanding of radioactivity — the detection of the different isotopes of lead and then uranium, the discovery of additional decay series, the development of increasingly accurate analytical techniques and so on — they refined radiometric dating. And with each passing decade resistance waned, so that by 1956, when Clair Patterson at Caltech determined Earth’s age as about 4.55 billion years based on lead-lead dating of meteorites, the debate was not about whether radiometric dating was fundamentally sound but rather about the details of its application.

Prior to the development of radiometric dating, geologists based estimates of geologic time on other methods, including correlating known stratigraphic thicknesses to observed rates of sedimentation. Credit: ©Anna (cornishwhippet), Creative Commons Attribution-NonCommercial-NoDerivs 2.0 Generic.

Throughout his career, Holmes was at the forefront of research and innovation in his field. He continually updated and published new geologic timescales, and he revised his estimate of Earth’s age several times, from the initial 1.6 billion years to 3 billion and 3.35 billion years before eventually arriving, separately from Patterson, at 4.5 billion years. Beyond his work on radiometric dating, he also made important contributions to the understanding of mantle convection and the motions of the continents.

In addition to “The Age of the Earth,” Holmes wrote the equally famous “Principles of Physical Geology,” first published in 1944. He was awarded the Penrose Medal by the Geological Society of America and the Wollaston Medal by the Geological Society of London, both in 1956, and an award from the European Geosciences Union is now named for him. Perhaps the most fitting accolade he received, however, considering his lifelong quest, was bestowed on him by his collaborator Alfred Nier, who dubbed Holmes the father of geological timescales, a title he most certainly earned.

© 2008-2021. All rights reserved. Any copying, redistribution or retransmission of any of the contents of this service without the expressed written permission of the American Geosciences Institute is expressly prohibited.